iPhone accessibility represents one of technology’s most remarkable achievements in creating truly inclusive digital experiences. Apple’s commitment to universal design has transformed how millions of users interact with their devices, breaking down barriers that once seemed insurmountable. The accessibility features built into every iPhone extend far beyond simple accommodations, creating powerful tools that enhance usability for users across the entire spectrum of abilities and preferences.

Understanding iPhone accessibility requires recognizing that these features serve dual purposes. While primarily designed to assist users with specific needs related to vision, hearing, motor skills, or cognitive function, many accessibility features provide benefits that appeal to all users. The comprehensive nature of iOS accessibility means that whether you’re seeking to accommodate a specific disability, enhance productivity, or simply discover new ways to interact with your device, the accessibility suite offers solutions that can transform your iPhone experience.

The philosophy behind iPhone accessibility centers on the principle that technology should adapt to users rather than forcing users to adapt to technology. This approach has resulted in features that integrate seamlessly with the operating system, providing assistance without compromising the elegance or functionality that defines the iPhone experience. From the moment you power on an iPhone, accessibility options are available, ensuring that setup and initial configuration remain accessible to users with diverse needs.

Modern iPhone accessibility encompasses four primary categories of assistance: vision support for users with blindness, low vision, or color vision differences; hearing support for users who are deaf or hard of hearing; motor support for users with limited mobility or dexterity challenges; and cognitive support for users with learning differences or attention difficulties. Each category contains multiple sophisticated features that work independently or in combination to create customized accessibility solutions.

Vision Accessibility: Beyond Sight

VoiceOver is the screen reader built into every iPhone. It turns the visual interface into an audio-based experience, enabling users who are blind or have severe vision impairments to navigate every aspect of their device. VoiceOver does this by speaking a description of each element on screen, from app icons and buttons to complex interface components and even the contents of images.

The genius of VoiceOver lies not merely in its ability to speak text, but in its comprehensive understanding of interface context. When navigating through applications, VoiceOver provides information about element types, states, and relationships that sighted users gather visually. The system recognizes when elements are buttons, links, headings, or form fields, announcing this information alongside the element’s content. This contextual awareness enables VoiceOver users to understand not just what appears on screen, but how interface elements function and relate to one another.
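To make the idea of contextual announcements concrete, here is a minimal Python sketch of how a screen reader might combine an element's label, value, state, and type into one spoken phrase. The `Element` class, its field names, and the ordering of the parts are assumptions made for illustration; this is not Apple's implementation or API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Element:
    """Hypothetical stand-in for an on-screen element; not an Apple type."""
    label: str                    # what the element is called
    role: Optional[str] = None    # element type, e.g. "Button", "Link", "Heading"
    state: Optional[str] = None   # e.g. "Selected", "Dimmed"
    value: Optional[str] = None   # current value, e.g. "On", "50 percent"


def announcement(element: Element) -> str:
    """Join the parts a screen reader might speak, skipping missing ones.

    The order (state, label, value, role) is an assumption for this sketch.
    """
    parts = [element.state, element.label, element.value, element.role]
    return ", ".join(part for part in parts if part)


print(announcement(Element("Send", role="Button")))
print(announcement(Element("Wi-Fi", role="Switch", value="On")))
```

The point of the sketch is that the spoken phrase carries more than the visible text: a listener learns that "Send" is a button and that "Wi-Fi" is a switch currently turned on.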

VoiceOver’s gesture system replaces traditional touch interactions with a set of finger movements that provide complete device control. A single tap selects an element and speaks it, a double tap activates the selected element, and multi-finger gestures provide navigation shortcuts and advanced functionality. The learning curve for VoiceOver gestures might seem steep initially, but the built-in VoiceOver Practice mode lets users try gestures safely and master them progressively.

Zoom functionality addresses the needs of users with low vision by providing magnification capabilities that extend far beyond simple text enlargement. The Zoom feature can magnify the entire screen up to fifteen times normal size, with options for full-screen magnification or windowed zoom that affects only specific screen regions. Advanced zoom options include the ability to follow text input, invert colors within the zoomed area, and apply custom filters that enhance visibility for users with specific vision conditions.

Display accommodations represent a sophisticated suite of visual modifications that address various vision-related needs. Smart Invert creates a dark interface that maintains the natural appearance of images and photos while inverting text and interface elements. Color filters assist users with color blindness by adjusting the display to enhance color differentiation, while options for reducing white point and increasing contrast help users who experience discomfort from bright displays or require higher contrast for clear visibility.

The Magnifier feature transforms the iPhone camera into a powerful magnifying glass, providing up to fifteen times magnification for real-world objects. This tool proves invaluable for reading small print, examining detailed objects, or navigating environments where visual detail matters. Advanced Magnifier options include freeze frame capability for examining moving objects, multiple lighting options, and color filters that can enhance visibility of specific objects or text.

Spoken Content features bridge the gap between visual and auditory information processing. Speak Selection allows users to have any selected text read aloud, while Speak Screen provides complete audio rendering of entire screen contents. These features support users with dyslexia, vision fatigue, or learning differences while also proving useful for multitasking scenarios where audio consumption of written content enhances productivity.

Motor and Physical Accessibility: Redefining Interaction

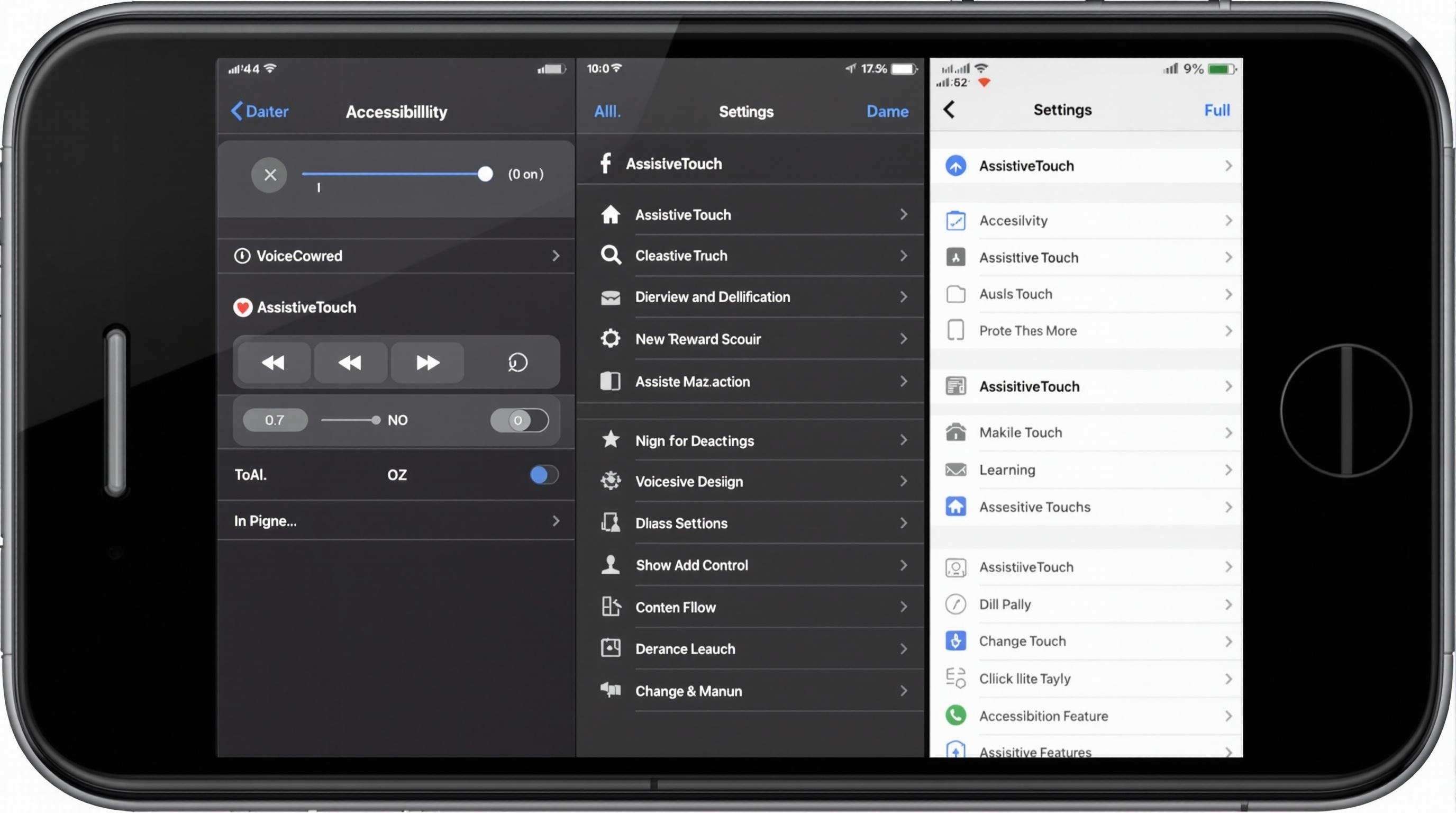

AssistiveTouch revolutionizes iPhone interaction for users with motor impairments by creating a customizable virtual interface that replaces physical button presses and complex gestures. This floating menu system can be positioned anywhere on screen and configured to perform virtually any iPhone function through simple taps. Users can create custom gestures, record complex multi-touch interactions, and access device functions that would otherwise require physical button combinations or difficult finger movements.

The power of AssistiveTouch extends beyond basic functionality replacement. Advanced users can create sophisticated automation sequences that combine multiple actions into single menu selections. This capability proves particularly valuable for users who experience fatigue or pain from repetitive motions, as complex workflows can be condensed into simple single-tap operations. The menu structure itself adapts to user preferences, with options for nested menus that organize functions logically while maintaining easy access to frequently used features.

Switch Control represents the pinnacle of alternative input methods, enabling iPhone operation through external switches, head movements, or other adaptive input devices. This system transforms the iPhone interface into a scanning environment where elements are highlighted sequentially, allowing users to make selections through single-switch activation. Advanced Switch Control configurations support multiple switches for more sophisticated navigation patterns, while timing options accommodate users with varying response speeds.
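The scanning idea can be sketched in a few lines of Python. This is a simplified model, not how iOS implements Switch Control: the function name `scan_and_select`, the `press_at_step` parameter (the scanning step at which a hypothetical user presses their switch), and the `max_loops` limit are all assumptions made for illustration.

```python
from typing import List, Optional


def scan_and_select(items: List[str], press_at_step: int, max_loops: int = 3) -> Optional[str]:
    """Highlight items one at a time and return the item that is highlighted
    when the switch is pressed.

    Scanning wraps back to the first item after the last one and stops
    after max_loops full passes if the switch is never pressed.
    """
    if not items:
        return None
    for step in range(len(items) * max_loops):
        highlighted = items[step % len(items)]  # item currently highlighted
        if step == press_at_step:               # the user activates the switch
            return highlighted
    return None  # scanning ended without a selection


icons = ["Phone", "Mail", "Safari", "Music"]
print(scan_and_select(icons, press_at_step=2))
print(scan_and_select(icons, press_at_step=5))
```

A single switch is enough to reach any item because the user only has to wait for the highlight to arrive; the timing options mentioned above correspond to how long each step lasts, which this sketch leaves out.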

Touch Accommodations address the reality that not all users can interact with touchscreens in the precise manner that standard interfaces expect. These features adjust how the system interprets touch input, accommodating users whose finger movements may be imprecise, who experience tremors, or who require longer contact times for reliable activation. Hold Duration settings allow users to customize how long touches must be maintained before activation, while Ignore Repeat prevents accidental multiple activations from users who cannot immediately lift their finger after touching.
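As a rough illustration of how these two settings filter input, the Python sketch below drops touches that are shorter than a hold duration and touches that begin too soon after the previously accepted one. The `Touch` class, the function name, and the choice to measure repeats from the start of the last accepted touch are assumptions for this example, not a description of the iOS implementation.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Touch:
    start: float     # seconds at which the finger touched down
    duration: float  # seconds the finger stayed on the screen


def accepted_touches(touches: List[Touch], hold_duration: float,
                     ignore_repeat: float) -> List[Touch]:
    """Return only the touches that would count as intentional."""
    accepted: List[Touch] = []
    last_accepted_start: Optional[float] = None
    for touch in sorted(touches, key=lambda t: t.start):
        if touch.duration < hold_duration:
            continue  # too brief: treated as an accidental brush
        if (last_accepted_start is not None
                and touch.start - last_accepted_start < ignore_repeat):
            continue  # too soon after the last accepted touch: a repeat
        accepted.append(touch)
        last_accepted_start = touch.start
    return accepted


touches = [Touch(0.0, 0.05), Touch(1.0, 0.4), Touch(1.3, 0.4), Touch(3.0, 0.5)]
kept = accepted_touches(touches, hold_duration=0.3, ignore_repeat=0.5)
print([t.start for t in kept])
```

With a 0.3 second hold duration and a 0.5 second repeat window, the brief brush at 0.0 and the repeated touch at 1.3 are discarded, leaving the touches that began at 1.0 and 3.0.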

Back Tap functionality transforms the iPhone’s rear surface into an input area, enabling users to perform various actions through gentle taps on the back of the device. This feature particularly benefits users who find reaching certain screen areas difficult or who prefer alternative activation methods. The system distinguishes between double and triple taps, so users can assign a different action, such as an accessibility feature or a shortcut, to each pattern.

Reachability addresses the challenge of using large-screen iPhones with limited hand mobility by shifting the entire interface downward when activated. This simple but effective feature brings all screen elements within reach of users who cannot comfortably extend their thumb across the full display area, whether due to hand size, strength limitations, or range of motion restrictions.

Voice Control provides comprehensive iPhone operation through spoken commands, eliminating the need for touch interaction entirely. This system understands natural language commands for navigation, text input, and application control, while also supporting numbered overlays that allow precise selection of any screen element. Advanced Voice Control features include custom vocabulary recognition, contextual command adaptation, and the ability to create complex command sequences that automate frequently performed tasks.

Hearing and Audio Accessibility: Sound Without Barriers

Hearing Aid Compatibility transforms the iPhone into a sophisticated audio processing device for users with hearing aids. Made for iPhone hearing aids connect directly to the device via specialized Bluetooth protocols, providing clear audio streaming while maintaining optimal battery efficiency. The system automatically adjusts audio routing, volume levels, and processing characteristics to optimize sound quality for each user’s specific hearing aid configuration and hearing loss pattern.

Live Listen functionality converts the iPhone into an assistive listening device by using the device microphone to capture nearby sounds and transmit them directly to connected hearing aids or headphones. This feature proves invaluable in challenging acoustic environments like restaurants, lecture halls, or social gatherings where background noise interferes with conversation comprehension. The system provides adjustable sensitivity and noise filtering to optimize audio clarity for various environmental conditions.

Sound Recognition technology enables the iPhone to monitor environmental audio continuously, alerting users through visual or haptic notifications when specific sounds occur. This system can detect and notify users about smoke alarms, doorbells, a crying baby, running water, and various household appliances. Advanced users can train the system to recognize custom sounds specific to their environment, creating personalized alert systems that enhance safety and awareness.

Visual alert features provide visual representations of audio information through LED flash alerts and screen-based notifications. These features ensure that users who cannot hear audio alerts remain informed about incoming calls, messages, and system notifications. Options such as flashing only in silent mode, together with custom vibration patterns, help users notice and tell apart notifications without relying on sound.

Headphone Accommodations apply audio processing that adapts sound output to individual hearing characteristics. The system can amplify soft sounds, adjust frequency responses, and enhance speech clarity based on user-specific hearing profiles. These accommodations work with supported Apple and Beats headphones rather than with every connected headset, turning those devices into personalized hearing assistance tools.

Closed Captions and subtitles support extends beyond simple text display to include comprehensive customization options that address various reading and processing needs. Users can adjust text size, font selection, background opacity, and color schemes to optimize subtitle visibility and comprehension. The system supports multiple caption formats and automatically enables captions when available across supported applications and media content.

Cognitive and Learning Support: Enhancing Mental Processing

Guided Access creates focused learning and task completion environments by temporarily restricting iPhone functionality to specific applications or screen areas. This feature proves invaluable for users with attention difficulties, autism spectrum conditions, or cognitive disabilities who benefit from reduced distraction and simplified interface interactions. Advanced Guided Access configurations allow instructors, caregivers, or users themselves to disable specific screen regions, limit time-on-task, or prevent access to certain application features.

The power of Guided Access extends to educational and therapeutic applications where maintaining focus on specific tasks enhances learning outcomes. Teachers can configure devices for standardized testing environments, while therapists can create controlled interaction spaces that support skill development. The system includes time limits that end a session automatically and passcode protection that prevents unauthorized configuration changes.

Spoken Content features serve dual purposes by supporting both vision accessibility and cognitive processing needs. For users with dyslexia, processing difficulties, or attention challenges, having text read aloud while simultaneously viewing written content enhances comprehension and retention. The system provides adjustable reading speeds, voice selection, and highlighting options that follow along with spoken text, creating multi-modal learning experiences that accommodate diverse processing styles.

Background Sounds provide environmental audio that can improve focus, reduce anxiety, or mask distracting environmental noise. The built-in soundscapes include options like rain, ocean waves, and several kinds of noise that can be played continuously in the background while using other applications. A separate volume setting controls how loudly they play while other media is playing, so that background sounds complement rather than interfere with other audio content.

Attention-based features use the iPhone’s TrueDepth camera to adapt the device’s behavior to where the user is looking. The Require Attention for Face ID setting can be turned off for users who have difficulty looking directly at the device, while Attention Aware Features check whether the user is looking at the screen before dimming the display, so the screen stays lit when users are reading but not actively touching it.

| Feature Category | Primary Benefits | User Groups Served | Integration Level |
| --- | --- | --- | --- |
| Vision Support | Screen reading, magnification, visual enhancement | Blind, low vision, color vision differences | Complete system integration |
| Motor Support | Alternative input methods, gesture customization | Limited mobility, dexterity challenges | Hardware and software adaptation |
| Hearing Support | Audio amplification, visual alerts, sound processing | Deaf, hard of hearing, auditory processing | Audio system optimization |
| Cognitive Support | Focus enhancement, distraction reduction, processing aids | Learning differences, attention challenges | Application and system-wide |

Advanced Accessibility Integration and Customization

The Accessibility Shortcut is among the most useful features for regular accessibility users, providing instant access to frequently used features by triple-clicking the side button (or the Home button on models that have one). The shortcut can be configured to toggle a single feature immediately or, when several features are assigned, to present a menu for quick selection. The customization options accommodate users who rely on multiple accessibility features simultaneously while ensuring that activation remains simple and reliable.

Per-App Settings demonstrate Apple’s commitment to granular accessibility control by allowing different display and text configurations for individual applications. Users can, for example, enable larger or bold text in a reading application while keeping the default size elsewhere, or turn on Increase Contrast, Smart Invert, or Reduce Motion only in the apps where they help. This approach recognizes that accessibility needs often vary depending on the task being performed and the application being used.

Accessibility API integration ensures that third-party applications can leverage iPhone accessibility features effectively. Developers who follow accessibility guidelines create applications that work seamlessly with VoiceOver, Switch Control, and other assistive technologies. This ecosystem approach means that accessibility benefits extend beyond Apple’s own applications to encompass the entire iOS app environment.
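On iOS, apps supply this information through UIKit properties such as `accessibilityLabel` and `accessibilityTraits`. The Python sketch below does not call those APIs; it only models the kind of check a developer might run to catch elements that assistive technologies could not describe. The dictionary keys (`id`, `label`, `role`) and the `audit` function are hypothetical names chosen for illustration.

```python
from typing import Dict, List


def audit(elements: List[Dict[str, str]]) -> List[str]:
    """Report elements that lack the text a screen reader would need."""
    problems: List[str] = []
    for index, element in enumerate(elements):
        name = element.get("id", f"element {index}")
        if not element.get("label", "").strip():
            problems.append(f"{name}: missing label")  # nothing to speak
        if not element.get("role", "").strip():
            problems.append(f"{name}: missing role")   # type is unknown
    return problems


screen = [
    {"id": "send", "label": "Send", "role": "button"},
    {"id": "icon", "label": "", "role": "button"},  # unlabeled icon button
    {"id": "banner", "label": "Sale"},              # no role declared
]
for problem in audit(screen):
    print(problem)
```

An unlabeled icon button is a common example of the gap this check targets: it looks fine on screen but gives a screen reader nothing meaningful to announce.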

Family and Caregiver Support features recognize that many iPhone users require assistance with device configuration and management. Accessibility settings can be configured remotely through Family Sharing, while Screen Time restrictions can prevent accidental changes to critical accessibility configurations. Emergency SOS integration ensures that users with communication difficulties can still access emergency services through simplified activation methods.

Device Interoperability extends iPhone accessibility benefits to the broader Apple ecosystem. VoiceOver gestures learned on iPhone carry over to iPad, and VoiceOver on Mac builds on the same concepts, while Apple Watch integration provides additional interaction methods for users with motor impairments. AirPods integration extends hearing accessibility features across Apple devices, creating accessibility experiences that adapt to user location and context.

| Accessibility Feature | Activation Method | Customization Options | Compatible Apps |
| --- | --- | --- | --- |
| VoiceOver | Settings or Triple-Click | Voice, speed, verbosity, gesture customization | All iOS apps with proper accessibility implementation |
| Switch Control | Settings or Triple-Click | Switch assignment, timing, scanning patterns | Universal system control |
| AssistiveTouch | Settings or Triple-Click | Menu customization, gesture recording, device controls | System-wide functionality |
| Voice Control | Settings or Voice Command | Language, vocabulary, command customization | Complete device operation |

Professional and Educational Applications

Educational institutions have embraced iPhone accessibility features as fundamental tools for inclusive learning environments. VoiceOver enables students with visual impairments to participate fully in digital learning activities, while Guided Access creates focused testing environments that accommodate students with attention difficulties. The combination of these features with standard educational applications creates comprehensive learning platforms that serve diverse student populations without requiring specialized equipment or software.

iphone-release.com